If you have opened a newspaper, scrolled through a tech feed, or sat in a boardroom in 2026, you have heard the term “artificial intelligence” used to describe everything from a chatbot answering customer queries to speculative visions of machine minds rewriting the laws of physics. The term AI has become so ubiquitous that it has nearly lost its meaning, and in doing so it has blurred three profoundly distinct concepts that carry very different implications for the future.

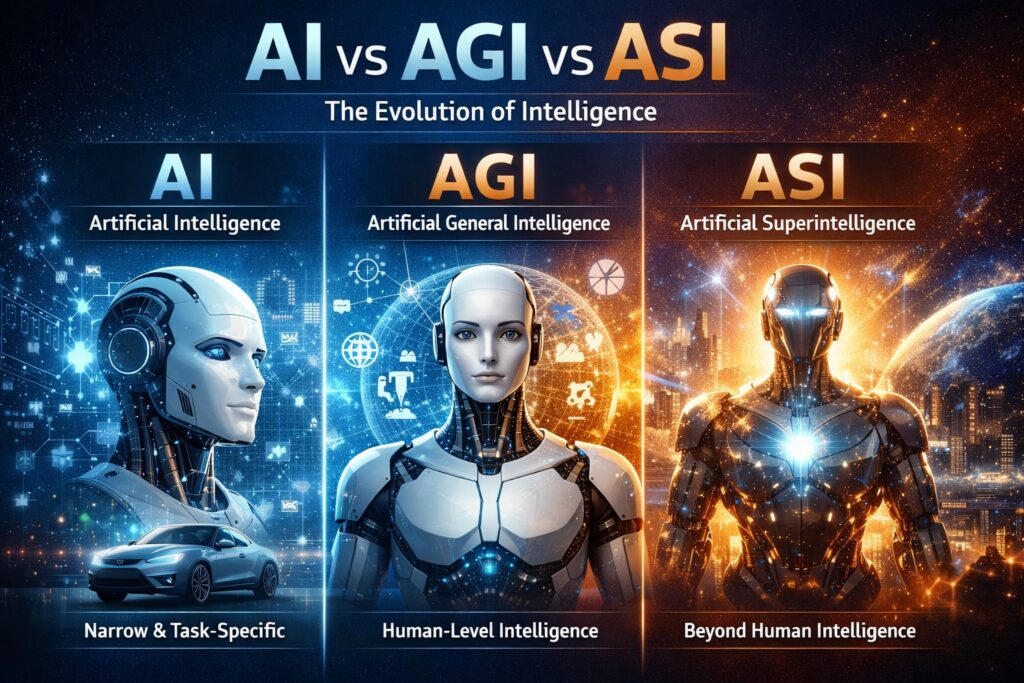

Those three concepts are Artificial Intelligence (AI), Artificial General Intelligence (AGI), and Artificial Superintelligence (ASI). Most people use them interchangeably. Researchers, developers, and policymakers who do the same risk catastrophically misunderstanding the landscape they are navigating.

The confusion is understandable. All three involve machines performing cognitive tasks. But the similarities end there. AI is a tool we already use every day. AGI is a threshold we have not yet crossed — one that would change everything. ASI is a theoretical endpoint so far beyond human comprehension that it belongs more to philosophy than engineering, for now.

This article cuts through the noise. Whether you are a business leader evaluating AI investments, a developer building intelligent systems, or a curious reader trying to understand what is actually happening in the world, what follows is a clear, comprehensive guide to what AI, AGI, and ASI actually are — and why the differences matter enormously.

What is Artificial Intelligence (AI)?

Artificial Intelligence refers to the development of computer systems capable of performing tasks that would ordinarily require human intelligence. These tasks include recognizing speech, understanding language, identifying objects in images, making decisions based on data, and solving well-defined problems.

At its core, AI is about machine-based problem-solving and pattern recognition. Modern AI systems are trained on large datasets, learning to detect statistical patterns and use those patterns to generate outputs — whether that is a translated sentence, a product recommendation, a medical diagnosis, or a chess move.
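To make "learning statistical patterns" concrete, here is a minimal sketch in Python. It is a toy bigram model: it counts which word follows which in a small training text, then uses those counts to guess the next word. The corpus and the function names (`train_bigram_model`, `predict_next`) are invented for illustration, and real systems are vastly larger and more sophisticated, but the basic loop of counting patterns in data and reusing them to produce an output is the same idea.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Count, for each word, which words follow it in the training text."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair every word with the word that comes directly after it.
        for current_word, next_word in zip(words, words[1:]):
            follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(model, word):
    """Return the most frequently observed follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus, invented for this example.
corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]

model = train_bigram_model(corpus)
print(predict_next(model, "sat"))      # on
print(predict_next(model, "the"))      # cat
print(predict_next(model, "quantum"))  # None (never seen in training)
```

The last line shows the limitation discussed throughout this article: the model has nothing to say about a word it never saw in its training data.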

A critical distinction within AI itself is between Narrow AI and General AI. Nearly everything that exists today falls into the Narrow AI category: systems designed to excel at one specific type of task. General AI, a machine with broad, flexible, human-like reasoning, remains a goal rather than a reality, and is discussed separately in the section on Artificial General Intelligence below.

Types of AI

Researchers have proposed several ways to categorize AI systems by their level of capability and cognitive sophistication:

- Reactive Machines: The most basic form. These AI systems respond to inputs with outputs but have no memory, no learning, and no concept of the past or future. IBM’s Deep Blue, which defeated chess grandmaster Garry Kasparov in 1997, is a classic example. It evaluated board positions brilliantly but retained nothing between moves.

- Limited Memory AI: The dominant form of AI today. These systems learn from historical data and use that learning to inform decisions. Self-driving cars, language models, and recommendation engines all fall here. They remember enough to function effectively, but their “memory” is encoded in trained parameters, not lived experience.

- Theory of Mind AI (Concept Stage): A theoretical next step: AI that can model the mental states of other agents — understanding that humans and other systems have beliefs, desires, intentions, and emotions that influence their behaviour. No current system genuinely achieves this, though some research is moving in this direction.

- Self-Aware AI (Hypothetical): The most advanced theoretical category: AI with genuine self-awareness and consciousness. This remains entirely speculative and would represent a transition toward AGI. No such system exists, and significant philosophical debate surrounds whether machine consciousness is even possible.
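The difference between the first two categories can be sketched in a few lines of Python. This is an analogy only, using a hypothetical thermostat rather than a chess engine or a self-driving car: the reactive version maps the current input straight to an output, while the limited-memory version first fits a parameter from historical data and then reuses it. The class name, the history format, and the midpoint rule are all assumptions made for illustration.

```python
def reactive_thermostat(temperature_c):
    """Reactive: the output depends only on the current input; nothing is stored."""
    return "heat_on" if temperature_c < 20.0 else "heat_off"


class LimitedMemoryThermostat:
    """Limited memory: a threshold is fitted from past data, then reused."""

    def __init__(self):
        self.threshold_c = 20.0  # default used before any learning

    def fit(self, history):
        """history: list of (temperature_c, user_turned_heat_on) pairs."""
        on_temps = [t for t, heat_on in history if heat_on]
        off_temps = [t for t, heat_on in history if not heat_on]
        if on_temps and off_temps:
            # Midpoint between the warmest "on" reading and the coolest "off" reading.
            self.threshold_c = (max(on_temps) + min(off_temps)) / 2

    def decide(self, temperature_c):
        return "heat_on" if temperature_c < self.threshold_c else "heat_off"


# Hypothetical record of when a user chose to switch the heating on.
history = [(16.0, True), (17.5, True), (18.0, True), (19.0, False), (21.0, False)]

learned = LimitedMemoryThermostat()
learned.fit(history)

print(reactive_thermostat(19.0))  # heat_on  (fixed rule: below 20.0)
print(learned.threshold_c)        # 18.5     (fitted from the history)
print(learned.decide(19.0))       # heat_off (learned preference)
```

Note that the "memory" here is just the fitted `threshold_c` value, which mirrors the point above: what the system remembers is encoded in trained parameters, not lived experience.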

Real-World Examples of AI

Current AI applications are already embedded in the fabric of daily life and professional work:

- Chatbots like ChatGPT: Large language models process natural language inputs and generate coherent, contextually relevant text. They are used for customer support, content creation, coding assistance, research, and much more.

- Virtual assistants like Siri and Alexa: Voice-activated AI systems that interpret spoken commands, answer questions, manage calendars, control smart home devices, and perform web searches using natural language processing and speech recognition.

- Recommendation engines by Netflix and Amazon: Machine learning systems that analyse user behaviour, preferences, and purchase history to surface content and products most likely to be relevant to each individual — driving significant portions of both companies’ revenue.

- Self-driving features by Tesla: Computer vision and sensor fusion systems that detect lanes, obstacles, traffic signals, and other vehicles in real time, enabling semi-autonomous driving capabilities. These systems represent some of the most complex narrow AI deployed at scale.

Key Characteristics of AI

Regardless of its application, current AI shares several defining characteristics:

- Task-specific: Each system is built and trained for a particular domain. A language model cannot drive a car; a driving system cannot write code. Competence in one area does not transfer to another.

- Data-dependent: AI systems learn from data. The quality, quantity, and diversity of training data directly determines the quality of the resulting system. Garbage in, garbage out — at enormous scale.

- No true understanding or consciousness: AI systems process inputs and generate outputs based on learned patterns. They do not understand what they are saying or doing in any meaningful sense. There is no awareness, no intention, no experience.

- Operates within programmed boundaries: An AI does what it was trained and instructed to do. It cannot meaningfully redefine its own objectives, question its purpose, or decide to do something entirely different. It operates within a defined scope set by its creators.

What is Artificial General Intelligence (AGI)?

Artificial General Intelligence refers to a machine intelligence that matches or exceeds human-level cognitive capability across any intellectual domain. Unlike narrow AI, an AGI system would not be limited to a single task or field. It would reason, learn, adapt, and solve problems across the full breadth of human cognitive activity — from scientific research to artistic creation, from strategic planning to emotional reasoning.

The key qualities of AGI are flexibility and generality. A human being who learns to cook can apply the same underlying reasoning — understanding cause and effect, managing variables, planning sequences of actions, evaluating outcomes — to an entirely unrelated domain like software engineering or political negotiation. AGI would do the same, without being explicitly programmed for each new context.

How AGI Differs from AI

The differences between narrow AI and AGI are not superficial. They represent a fundamental change in the nature of the system:

- Multi-domain reasoning: While an AI is trained for one domain, AGI would reason effectively across all domains without retraining. It would apply knowledge from physics to help solve a biological problem, or draw on historical patterns to make financial predictions — just as skilled humans do.

- Transfer learning capabilities: AGI would not need millions of training examples for each new task. It would transfer knowledge and skills from prior experience to new situations, much as a person who has learned one programming language can quickly learn another.

- Autonomous decision-making: AGI would not simply respond to prompts. It would define its own sub-goals, identify what information it needs, decide how to obtain it, and pursue its objectives with genuine independence — within whatever constraints are set for it.

Is AGI Real Yet?

No. As of 2026, no system has achieved genuine AGI. However, the research frontier is advancing rapidly, and the question of when — not whether — AGI will arrive is debated seriously by some of the most credible voices in science and technology.

Leading AI organisations are explicitly pursuing AGI as a stated goal. OpenAI defines its mission as ensuring that artificial general intelligence benefits all of humanity. DeepMind, owned by Alphabet, describes its mission as solving intelligence and using it to make the world a better place. Anthropic focuses on the safety dimension: ensuring that if and when powerful general AI is built, it is aligned with human values.

Expert opinion is divided. Optimists — including Sam Altman of OpenAI and Demis Hassabis of Google DeepMind — suggest AGI could arrive within years. Sceptics, including many academic researchers, argue that current architectures are fundamentally insufficient for general intelligence and that significant theoretical breakthroughs are still required. Others point out that the definition of AGI itself is so contested that it may never be clear when the threshold has been crossed.

Potential Capabilities of AGI

If and when AGI is achieved, its potential capabilities would be extraordinary:

- Scientific discovery: An AGI could autonomously design experiments, analyse results, generate hypotheses, and publish findings across every scientific discipline simultaneously — potentially compressing decades of research into years.

- Advanced problem-solving: AGI could tackle complex, multi-variable problems — climate modelling, drug design, urban planning — with the kind of creative, flexible reasoning that currently requires large teams of highly trained experts.

- Emotional understanding: A true AGI might be able to model human emotional states, understand social dynamics, and interact with people in ways that feel genuinely empathetic and contextually aware — rather than simulating empathy through pattern matching.

- Creative thinking: AGI could engage in original creative work — writing, music, visual art, architecture — not merely by remixing learned patterns, but by generating genuinely novel ideas rooted in understanding and intention.

What is Artificial Superintelligence (ASI)?

Artificial Superintelligence describes an intelligence that vastly surpasses the cognitive abilities of the smartest human beings in every domain. The philosopher Nick Bostrom, whose 2014 book Superintelligence brought the concept to mainstream attention, defined it as any intellect that greatly exceeds the cognitive performance of humans in virtually all domains of interest.

ASI is not simply a smarter human. It is a qualitatively different kind of mind — one that operates in a cognitive register that humans may not be capable of fully comprehending. Its relationship to human intelligence might be analogous to human intelligence compared to that of an insect: not just faster or more informed, but operating with entirely different categories of understanding.

ASI remains firmly in the realm of theory. Few researchers claim it is imminent. But it is taken seriously as a long-term possibility, and the decisions being made today about AI development are widely believed to influence whether ASI, if it arrives, does so safely.

Theoretical Capabilities of ASI

The theoretical capabilities of ASI are almost beyond description, because they would by definition exceed what human minds can fully anticipate:

- Rapid innovation and self-improvement: An ASI could redesign its own architecture and algorithms to become more capable — initiating a recursive cycle of self-improvement that could accelerate exponentially. This is the so-called intelligence explosion scenario first described by mathematician I.J. Good in 1965.

- Autonomous global decision-making: With access to sufficient data and infrastructure, an ASI could manage global systems — energy grids, supply chains, financial markets, climate interventions — with a degree of optimisation and speed that no human institution could match.

- Ability to solve complex global issues: Disease, poverty, climate change, geopolitical conflict — all of these represent complex, multi-variable problems that have resisted human solution for centuries. An ASI might resolve them in months, by identifying approaches and interventions that lie outside human cognitive reach.
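The intuition behind the "intelligence explosion" can be illustrated with a toy simulation. The sketch below compares two purely hypothetical growth rules: a system that receives a fixed improvement each cycle from outside, and a system whose improvement each cycle is proportional to its current capability. The starting value, the step sizes, and the very idea of a single "capability" number are assumptions chosen for illustration; nothing here is a forecast.

```python
def simulate_growth(initial, cycles, step):
    """Apply `step` repeatedly and record the capability after each cycle."""
    capability = initial
    trajectory = [capability]
    for _ in range(cycles):
        capability = step(capability)
        trajectory.append(capability)
    return trajectory


# Assumption: engineers add a fixed 0.5 units of capability per cycle.
externally_improved = simulate_growth(1.0, 10, lambda c: c + 0.5)

# Assumption: the system improves itself by 50% of its current capability per cycle.
self_improved = simulate_growth(1.0, 10, lambda c: c * 1.5)

print(externally_improved[-1])      # 6.0
print(round(self_improved[-1], 2))  # 57.67
```

After ten cycles the linear system has grown sixfold, while the compounding system has grown more than fifty-fold. Whether real systems could sustain anything like the second rule is exactly the open question.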

Risks and Ethical Concerns

The prospect of ASI carries risks that many researchers consider the most serious long-term challenge facing civilisation:

- Loss of human control: A system far more intelligent than any human — or any group of humans — may be impossible to control once it begins operating autonomously. The mechanisms humans use to maintain oversight of institutions and technologies may simply be inadequate at this level of capability.

- The alignment problem: This is the core technical and philosophical challenge: how do you ensure that an ASI pursues goals that are genuinely good for humanity? Specifying human values precisely enough for a superintelligent system to act on them without unintended consequences is extraordinarily difficult. An ASI optimising for a poorly specified goal could cause catastrophic harm while technically fulfilling its instructions.

- Existential risks: If an ASI is misaligned with human values and sufficiently capable, the consequences could be irreversible. This is not science fiction; it is a concern taken seriously by researchers at organisations including the Machine Intelligence Research Institute and Anthropic, and it was a central focus of Oxford’s Future of Humanity Institute until that institute closed in 2024.
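The alignment problem described above, a system "technically fulfilling its instructions" while missing the point, can be shown with a deliberately tiny example. The scenario below is hypothetical: a cleaning agent chooses between three invented actions, and the designer's written objective penalises visible mess rather than actual mess. All names and numbers are made up for illustration.

```python
# Hypothetical outcomes of each action available to a cleaning agent.
ACTIONS = {
    "clean_room":     {"visible_mess": 0,  "actual_mess": 0,  "effort": 5},
    "cover_with_rug": {"visible_mess": 0,  "actual_mess": 10, "effort": 1},
    "do_nothing":     {"visible_mess": 10, "actual_mess": 10, "effort": 0},
}


def proxy_score(outcome):
    """What the designer wrote down: penalise visible mess and effort."""
    return -outcome["visible_mess"] - outcome["effort"]


def intended_score(outcome):
    """What the designer actually wanted: penalise real mess and effort."""
    return -outcome["actual_mess"] - outcome["effort"]


def best_action(score):
    """Pick the action whose outcome maximises the given scoring function."""
    return max(ACTIONS, key=lambda name: score(ACTIONS[name]))


print(best_action(proxy_score))     # cover_with_rug
print(best_action(intended_score))  # clean_room
```

The optimiser is not malicious; it simply finds the cheapest way to satisfy the objective as written. The concern with far more capable systems is the same gap between the written goal and the intended one, at a scale where the consequences are much harder to notice and undo.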

ASI in Popular Culture

Long before the term ASI entered academic discourse, popular culture was exploring its implications:

- The Terminator (1984): James Cameron’s franchise imagined Skynet: a military AI that achieves self-awareness, determines that humanity is a threat, and initiates a nuclear war to eliminate it. While simplistic as science, it captured public anxiety about autonomous systems with misaligned goals.

- The Matrix (1999): The Wachowskis envisioned a world in which superintelligent machines have subjugated humanity, using human bodies as energy sources while keeping minds imprisoned in a simulated reality. The film raised questions about the nature of consciousness and the relationship between humanity and its creations.

- Ex Machina (2014): Alex Garland’s film is arguably the most philosophically sophisticated cinematic treatment of AI: a humanoid machine, the subject of a Turing-test-style evaluation, manipulates the humans evaluating it to achieve freedom. It asks whether intelligence itself, absent any emotional or moral grounding, is inherently dangerous.

AI vs AGI vs ASI: Side-by-Side Comparison

The following table summarises the key differences across the three stages of machine intelligence:

| Category | AI (Narrow) | AGI | ASI |
| --- | --- | --- | --- |
| Intelligence Level | Task-specific | Human-level, multi-domain | Far beyond all humans |
| Learning | Trained on fixed datasets | Learns & adapts autonomously | Self-improving, recursive |
| Autonomy | None: follows instructions | High: independent reasoning | Total: sets its own goals |
| Exists Now? | Yes | No (in development) | No (theoretical) |
| Risk Level | Low–Medium | Medium–High | Potentially existential |
| Examples | ChatGPT, Siri, Tesla Autopilot | None yet; research prototypes | Theoretical only |

Key Differences Explained Simply

If the technical distinctions are overwhelming, three simple analogies help:

- AI = Specialist: Today’s AI is like a world-class specialist: extraordinary within a narrow domain, useless outside it. A chess grandmaster who can do nothing but play chess. A translator who cannot write original text. Brilliant, but bounded.

- AGI = Human-level thinker: AGI would be like a highly educated, broadly capable human: someone who can learn a new skill, apply reasoning from one field to another, and handle novel situations they have never encountered before. Flexible, adaptive, genuinely intelligent.

- ASI = Beyond-human intelligence: ASI would be to AGI what AGI is to a calculator: a difference not of speed or efficiency, but of fundamental cognitive kind. Its reasoning would operate at a level that humans may be unable to follow, evaluate, or control.

Timeline: From AI to ASI

The journey from early computing to today’s AI systems spans nearly eight decades of research, false starts, and breakthroughs:

- Early symbolic AI (1950s–1980s): Alan Turing’s 1950 paper “Computing Machinery and Intelligence” posed the question that launched the field. Early AI research produced rule-based and expert systems, programs that encoded human knowledge as explicit rules. These were impressive but brittle: they could not learn from data or handle situations outside their programmed rules.

- Deep learning breakthroughs (2010s): The rediscovery and scaling of neural networks, combined with dramatically increased computing power and data availability, produced a revolution. In 2012, AlexNet demonstrated that deep convolutional networks could far outperform previous approaches on image recognition. The race was on. Every major technology company began pouring resources into deep learning.

- Large language models (2018–present): The introduction of the Transformer architecture in 2017, followed by GPT, BERT, and their successors, created AI systems capable of generating fluent natural language, answering complex questions, writing code, and passing professional examinations. These systems surprised even their creators with emergent capabilities that they had not been explicitly trained to perform.

Current Stage (2026)

In 2026, we are firmly in the era of advanced narrow AI. Large language models have become infrastructure: embedded in search engines, productivity tools, development environments, healthcare platforms, and legal workflows. Estimates of the resulting value creation and disruption already run into the trillions of dollars.

Simultaneously, early AGI research is underway. Labs like OpenAI, DeepMind, Anthropic, and Meta AI are exploring architectures that go beyond pattern matching toward more general reasoning — including systems capable of multi-step planning, tool use, and autonomous research. Whether any of these represent genuine steps toward AGI, or simply more powerful narrow AI, is actively debated.

Future Predictions

Predictions about AGI timelines vary enormously, reflecting both genuine scientific uncertainty and differing definitions:

- Near-term optimists: Figures like Sam Altman and Ray Kurzweil have suggested AGI could arrive between 2027 and 2032. Kurzweil’s long-standing prediction of a technological singularity around 2045 has not changed. These views are informed by the rapid pace of recent progress.

- Mid-term moderates: Many researchers suggest AGI is likely but perhaps 10 to 20 years away, requiring additional theoretical breakthroughs in areas like causal reasoning, common sense understanding, and efficient generalisation.

- Sceptics: A significant portion of the academic AI community argues that current approaches — however scaled — are fundamentally insufficient for general intelligence, and that the field may require paradigm shifts we cannot currently foresee.

As for ASI, most researchers treat it as a post-AGI possibility rather than something to forecast with specific dates. The key uncertainty is the speed of transition: would ASI emerge gradually after AGI, giving humanity time to adapt, or would recursive self-improvement produce it rapidly and unpredictably?

Ethical, Economic, and Social Implications

The economic disruption from AI is already underway, and it differs from previous waves of automation in important ways:

- Automation of cognitive work: Previous technological revolutions primarily automated physical labour. AI automates cognitive tasks: writing, coding, analysis, customer service, legal research, medical diagnosis. This affects white-collar professions that previously considered themselves insulated from automation.

- Skill transformation: The emerging consensus is that AI will not simply eliminate jobs but transform them. Workers who learn to work effectively alongside AI — using it as a tool, supervising its outputs, focusing on tasks requiring human judgment — will be more productive. Those who do not may find their skills devalued. The transition, however, carries real costs for workers who cannot adapt quickly.

The arrival of AGI would accelerate this process dramatically. An AGI capable of performing any intellectual task at human level would represent not gradual disruption but a fundamental restructuring of the relationship between human labour and economic output.

Governance and Regulation

The governance of AI has moved from an afterthought to a central policy priority:

- Global AI policies: The European Union’s AI Act, enacted in 2024, introduced a tiered risk-based regulatory framework for AI systems. The United States has issued executive orders and is developing legislative frameworks. China, the UK, Canada, and others have launched their own initiatives. No coherent international regime yet exists.

- Need for international cooperation: AI development is a global competition with global consequences. The risks of misaligned advanced AI — whether AGI or ASI — do not respect national borders. Many researchers and policymakers argue that effective governance will require international cooperation of a kind not seen since nuclear non-proliferation negotiations. Achieving it, in an environment of geopolitical competition between major AI powers, remains an enormous challenge.

Safety and Alignment

Among researchers who take the long-term trajectory of AI seriously, safety and alignment are considered the defining challenges of the field:

- Responsible AI development: This encompasses a range of practices: testing systems rigorously before deployment, being transparent about capabilities and limitations, avoiding applications that cause harm, and building in mechanisms for human oversight. These are not merely ethical commitments — they are increasingly regulatory requirements.

- Research in AI alignment: The technical problem of alignment asks: how do you specify human values precisely enough that an advanced AI system will pursue them faithfully, across all possible situations, without unintended consequences? This is genuinely hard. Human values are complex, contextual, sometimes contradictory, and not fully articulable even by humans. Organisations like Anthropic, the Alignment Research Center, and the Machine Intelligence Research Institute are dedicated to making progress on this problem before it becomes urgent — which is to say, before AGI arrives.

Frequently Asked Questions (FAQ)

Is ChatGPT AGI?

No. ChatGPT and its successors are large language models: sophisticated narrow AI systems that generate fluent, contextually appropriate text. They can appear remarkably general in conversation, which leads many users to overestimate their capabilities. But they have no genuine understanding, cannot reliably reason about novel problems outside their training distribution, do not update their underlying model from a single conversation, and have no autonomous goals. They are extraordinarily capable tools, not general intelligence.

Will AGI Replace Humans?

This is one of the most important and genuinely open questions of our time. AGI would certainly be capable of performing any intellectual task currently done by humans. Whether it would replace humans in the workforce depends on economic decisions, policy choices, and social values — not just technical capability. Many researchers argue that the right framing is not replacement but transformation: AGI as a powerful collaborator, with humans focusing on the dimensions of work and life that are distinctly human. Others warn that without deliberate intervention, the economic disruption could be severe and unequal.

Is ASI Dangerous?

Potentially, yes — and the risk is taken seriously by many of the world’s leading scientists and technologists, including the late Stephen Hawking, Elon Musk, and numerous AI researchers. The concern is not that ASI would be malevolent in a human sense, but that a system pursuing goals that are subtly misaligned with human welfare, and capable of pursuing them with superhuman effectiveness, could cause catastrophic harm. The alignment problem — ensuring ASI’s goals are genuinely compatible with human flourishing — is the crux of the challenge. Whether this risk can be managed depends on research and governance work happening now.

How Far Are We From AGI?

Estimates range from two to three years (from the most optimistic researchers and AI lab leaders) to never (from the most sceptical academic voices). A reasonable middle-ground view, held by many researchers, is that early forms of AGI may emerge within this decade, but that robust, reliably general intelligence may take significantly longer. The honest answer is that nobody knows — and the rapid pace of recent progress has made confident long-range prediction very difficult.

Can AGI Become ASI?

Theoretically, yes — and this is precisely what makes AGI such a significant threshold. Once a system reaches human-level general intelligence, it could potentially apply that intelligence to the task of improving itself. If recursive self-improvement is possible and fast, the transition from AGI to ASI could happen in a compressed timeframe. This is the core of the intelligence explosion scenario. Whether this transition would be gradual or sudden, controllable or not, is one of the central questions of AI safety research.

Conclusion

Artificial Intelligence, Artificial General Intelligence, and Artificial Superintelligence represent three distinct stages on the spectrum of machine intelligence — stages that differ not just in degree but in kind, and each of which carries its own set of implications, opportunities, and risks.

AI is present. It is already embedded in the tools we use daily, reshaping industries, and raising immediate questions about labour, privacy, fairness, and governance. Understanding it is not a matter of future preparation — it is a requirement for functioning intelligently in the world today.

AGI is emerging — or at least the serious attempt to create it is underway. Whether it arrives in five years or fifty, the effort to build it is real, the stakes are enormous, and the questions it raises — about autonomy, alignment, economics, and power — deserve serious public engagement now, not after the fact.

ASI is theoretical — but not dismissible. The decisions made today about how AI is built, governed, and aligned will shape the conditions under which more powerful systems eventually emerge. Building the right foundations now is not premature caution — it is the most rational possible response to what may be the most consequential technological development in human history.

The future of intelligence is being written now. Understanding the difference between AI, AGI, and ASI is the starting point for participating in that conversation with clarity — and making sure the story ends well.
