According to McKinsey, over 70% of large-scale software transformations fail to meet their objectives. A Gartner study found that by the end of 2025, more than 85% of AI projects had delivered below expectations, not because of bad models or wrong use cases, but because of poor architectural decisions made before a single line of model integration code was written. The tools are getting better every quarter. The systems built around them are not keeping up.

This is the gap that rarely gets discussed. Enterprises are investing heavily in AI capabilities while underinvesting in the engineering discipline that determines whether those capabilities survive contact with production. The result is a widening distance between what AI-first software looks like in a controlled environment and what it actually does when real users depend on it at scale.

That gap lives in the architecture. Here is what closing it actually requires.

5 Things AI-First Software Architecture Demands from Engineering Teams

Building AI-first software is not an extension of building conventional software. It is a different problem category, one that exposes gaps in how most engineering teams think about reliability, security, and system design. These are the five demands that separate AI-first systems that hold in production from the ones that don’t.

1. Honesty About Non-Determinism

Traditional software is built on a foundational contract: same input, same output. Every testing methodology, every SLA, every quality assurance process in conventional software development rests on that assumption.

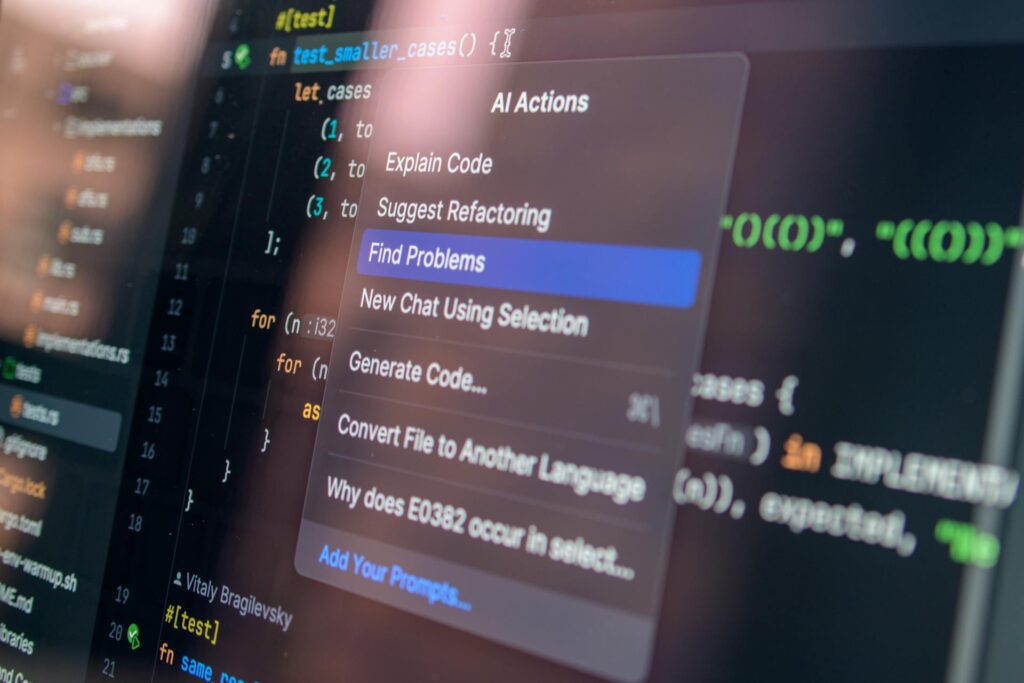

AI breaks that contract by design. A model’s response varies with context. Its confidence shifts. Its outputs drift as underlying models are updated by third-party providers, often without notice, often with no visible change in API behavior. This is not a defect. It is a characteristic. The problem is that most software architectures are designed around determinism, then retrofitted to accommodate AI, which puts the foundational assumption of the architecture in direct conflict with the foundational behavior of the system.

Designing honestly for this means explicit fallback layers, human-in-the-loop checkpoints wherever model output touches a consequential decision, and real-time instrumentation that detects degradation before users feel it. These are structural requirements. Teams that treat them as optional build systems that work until they don’t.
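As a rough illustration of the fallback layer and human-in-the-loop checkpoint described above, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `ModelResult` confidence score, the `decide` function, the 0.8 threshold, and the route names are hypothetical and do not come from any specific library or vendor API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ModelResult:
    text: str
    confidence: float  # hypothetical score in [0, 1] supplied by your model wrapper


@dataclass
class Decision:
    text: Optional[str]
    route: str  # "model", "fallback", or "human_review"


def decide(
    prompt: str,
    primary: Callable[[str], ModelResult],
    fallback: Callable[[str], str],
    min_confidence: float = 0.8,  # illustrative threshold, tune per use case
    consequential: bool = False,
) -> Decision:
    """Route one request to the model, a fallback layer, or a human checkpoint."""
    try:
        result = primary(prompt)
    except Exception:
        # The model call failed outright: answer from the deterministic fallback.
        return Decision(text=fallback(prompt), route="fallback")

    if consequential:
        # Model output touches a consequential decision: a person signs off.
        # The model text is passed along only as a draft for the reviewer.
        return Decision(text=result.text, route="human_review")

    if result.confidence < min_confidence:
        # Low confidence on a routine request: prefer the fallback answer.
        return Decision(text=fallback(prompt), route="fallback")

    return Decision(text=result.text, route="model")
```

Counting how often each `route` value occurs over time is one simple form of the instrumentation mentioned above: a rising share of `fallback` decisions is a signal of degradation before users report it.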

2. Security Designed In, Not Bolted On

Enterprise security frameworks were built around known inputs producing known outputs. AI-integrated systems expand the attack surface in ways those frameworks were not designed to handle.

Prompt injection (where malicious inputs manipulate model behavior) is not a theoretical concern. It is documented, actively exploited, and difficult to detect because the system technically behaves as designed. Data leakage through model outputs is a real vulnerability in any system where the model has access to proprietary information. Adversarial inputs can push outputs outside expected parameters and feed into downstream decisions before anyone identifies the source.

Engineering teams that build enterprise-grade, production-ready AI software handle this by defining trust boundaries before integration begins, treating input validation and output sanitization as standard pipeline components rather than optional additions, and approaching security as a design constraint rather than a deployment checklist. SOC 2 attestation and ISO 27001 certification, in this context, are not paperwork. They are evidence that security controls are embedded in the engineering process rather than added after delivery.
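To make "input validation and output sanitization as standard pipeline components" concrete, the sketch below wraps a model call in both steps. It is deliberately simplistic: the deny-list patterns, the length limit, and the email redaction rule are hypothetical placeholders, and a pattern list like this is nowhere near a complete defense against prompt injection. The point is the shape of the pipeline, where no request reaches the model and no response reaches the caller without crossing a trust boundary.

```python
import re
from typing import Callable

MAX_INPUT_CHARS = 4000  # hypothetical limit, chosen only for illustration

# Hypothetical deny-list. Real systems layer several defenses on top of this.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the )?system prompt", re.IGNORECASE),
]

# Rough email matcher used to show output redaction; not RFC-complete.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class RejectedInput(ValueError):
    """Raised when user input fails validation at the trust boundary."""


def validate_input(user_text: str) -> str:
    """Reject oversized input and input matching a known injection pattern."""
    if len(user_text) > MAX_INPUT_CHARS:
        raise RejectedInput("input too long")
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(user_text):
            raise RejectedInput("input matches a known injection pattern")
    return user_text


def sanitize_output(model_text: str) -> str:
    """Redact email-like strings before the response leaves the system."""
    return EMAIL_RE.sub("[redacted email]", model_text)


def guarded_call(user_text: str, model: Callable[[str], str]) -> str:
    """Validate on the way in, sanitize on the way out."""
    return sanitize_output(model(validate_input(user_text)))
```

Because `guarded_call` is the only path to the model in this sketch, the two checks cannot be skipped by an individual feature team, which is the practical meaning of treating them as pipeline components rather than optional additions.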

3. Full-Stack Reliability, Not Just Infrastructure Stability

In a traditional system, 99.9% uptime is largely an infrastructure problem. In an AI-integrated system, it is a fundamentally different promise.

Infrastructure can report healthy while the system quietly degrades because a third-party model API changed behavior, a data pipeline drifted, or a component started performing outside expected parameters. None of that shows up in a standard uptime dashboard.
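One way to surface this kind of silent degradation is to probe behavior, not just liveness. The sketch below is a hypothetical example: it runs a small set of "golden" prompts with known expected content through the model and reports the system as degraded when too few pass, even if infrastructure checks are green. The `GoldenCase` type, the substring check, and the 0.9 threshold are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class GoldenCase:
    prompt: str
    must_contain: str  # substring a correct answer is expected to include


def behavior_pass_rate(model: Callable[[str], str], cases: List[GoldenCase]) -> float:
    """Fraction of golden cases whose answer contains the expected substring."""
    if not cases:
        raise ValueError("at least one golden case is required")
    passed = 0
    for case in cases:
        try:
            answer = model(case.prompt)
        except Exception:
            continue  # a failed call counts as a failed case
        if case.must_contain.lower() in answer.lower():
            passed += 1
    return passed / len(cases)


def health_status(infra_ok: bool, pass_rate: float, min_pass_rate: float = 0.9) -> str:
    """Combine infrastructure liveness with observed model behavior."""
    if not infra_ok:
        return "down"
    if pass_rate < min_pass_rate:
        # Servers respond, but the answers no longer meet expectations.
        return "degraded"
    return "healthy"
```

The `degraded` state is the one a standard uptime dashboard cannot express: every request succeeds at the transport level while the answers are wrong.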

This is a distinction that firms with genuine AI delivery experience understand well. Radixweb, an AI-first software engineering company, was recently awarded the Platinum Honor at the 2026 TITAN Business Awards in the Outstanding IT Software/System category. The company built a high-availability B2B platform (99.9% uptime) that sustained performance under real operational pressure at scale.

That outcome did not come from infrastructure investment alone. It came from engineering the entire stack: data contracts, failure routing, observability design, and defined system behavior for when dependencies stop responding as expected. The TITAN recognition, awarded from a field of 5,100+ global entries, reflects exactly the kind of full-stack discipline that separates systems that hold from systems that are held together.

Most teams learn the importance of that sequence, designing for failure first and promising uptime second, only after reversing it.

4. Data Infrastructure That Can Actually Support AI

If there is one consistent finding across failed AI implementations, it is that the model was not the problem.

In most enterprises, data is fragmented across systems that were never designed to share schemas, governed inconsistently, and maintained in ways that made sense for the systems it originally served, not for AI components that require clean, structured, well-labeled inputs at volume. Laying AI-first architecture on top of that foundation without addressing data quality and pipeline integrity first is the engineering equivalent of building on sand.

Teams that build AI systems that hold in production spend a disproportionate amount of time upstream: on data contracts between systems, on validation logic that catches drift before it reaches the model, and on logging that makes the data layer auditable when something breaks at 2 a.m. This work rarely makes it into product announcements. It absolutely makes it into production behavior when edge cases arrive at scale.
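A data contract can be as simple as an explicit, checked list of the fields a downstream AI component expects. The following sketch assumes a hypothetical three-field contract (`customer_id`, `amount`, `note`) and shows records being validated and quarantined with reasons before they reach a model. The field names and rules are invented for illustration only.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass(frozen=True)
class FieldRule:
    name: str
    type_: type
    required: bool = True


# Hypothetical contract between an upstream system and the model pipeline.
CONTRACT = [
    FieldRule("customer_id", str),
    FieldRule("amount", float),
    FieldRule("note", str, required=False),
]


def violations(record: Dict[str, Any], contract: List[FieldRule]) -> List[str]:
    """Return a human-readable list of contract violations for one record."""
    problems = []
    for rule in contract:
        value = record.get(rule.name)
        if value is None:
            if rule.required:
                problems.append(f"missing required field: {rule.name}")
            continue
        if not isinstance(value, rule.type_):
            problems.append(
                f"wrong type for {rule.name}: "
                f"expected {rule.type_.__name__}, got {type(value).__name__}"
            )
    return problems


def split_batch(
    records: List[Dict[str, Any]], contract: List[FieldRule]
) -> Tuple[List[Dict[str, Any]], List[Tuple[Dict[str, Any], List[str]]]]:
    """Separate clean records from quarantined ones, keeping the reasons."""
    clean, quarantined = [], []
    for record in records:
        problems = violations(record, contract)
        if problems:
            quarantined.append((record, problems))
        else:
            clean.append(record)
    return clean, quarantined
```

Keeping the reasons alongside each quarantined record is what makes the data layer auditable: when something breaks, the log shows which upstream field changed rather than only that the model misbehaved.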

5. Systems Thinking Over Feature Thinking

The operational assumption behind most software development is stability: what a system does at launch is what it does six months later.

That assumption fails in AI-first systems. What the system does Tuesday can differ materially from what it does Friday if a third-party model received an update, if data distribution shifted, or if a dependent service changed behavior within an existing API version. The system is not broken by conventional definitions. It is responding to a changed environment. If the architecture was not built to detect and handle that, users find out first.
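Detecting that Tuesday-versus-Friday difference requires comparing current behavior against a recorded baseline. The sketch below is one simple, assumed approach: track a numeric signal per response (response length, refusal rate, a quality score) and measure how far the current window's mean has moved in units of the baseline's standard deviation. The function names and the threshold of 3.0 are illustrative choices, not a standard.

```python
from statistics import mean, pstdev
from typing import Sequence


def drift_score(baseline: Sequence[float], current: Sequence[float]) -> float:
    """How many baseline standard deviations the current mean has moved."""
    if len(baseline) < 2 or not current:
        raise ValueError("need at least two baseline values and one current value")
    spread = pstdev(baseline)
    shift = abs(mean(current) - mean(baseline))
    if spread == 0:
        # A perfectly constant baseline: any movement at all is a change.
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def has_drifted(
    baseline: Sequence[float], current: Sequence[float], threshold: float = 3.0
) -> bool:
    """Flag the current window when it moves past the chosen threshold."""
    return drift_score(baseline, current) > threshold


# Example: response lengths recorded at launch versus two later windows.
launch_lengths = [100, 102, 98, 101, 99]
print(has_drifted(launch_lengths, [100, 101, 99]))   # same mean as baseline
print(has_drifted(launch_lengths, [140, 150, 145]))  # mean moved far from baseline
```

A mean-shift check like this is crude and will miss changes that leave the average intact, but even a crude check running on a schedule means the team, rather than the users, is the first to learn that the environment changed.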

This demands engineering teams who reason about failure modes as rigorously as they reason about capabilities, who treat architecture reviews as exercises in interrogating assumptions rather than validating designs, and who consider operational stability a first-class engineering concern from day one. That maturity does not come from better tooling. It comes from how teams are built and what they are asked to prioritize.

AI-first architecture does not fail because the models are not good enough. It fails because the systems built around those models were not designed to hold.

AI Is Not the Risk. Underestimating It Is.

The technology is genuinely transformative. The models available today are capable of things that were not computationally feasible three years ago. The enterprises that learn to build around them with discipline will hold an advantage that compounds over time.

The five demands above are not obstacles to AI adoption. They are the work that makes adoption worth it. So, if your organization is moving AI from pilots to production, the question is not which model to use. It is whether the system being built around that model is designed to last.

Ask that question first. The rest follows.